AI Chatbots for Business: Benefits, Costs & Implementation Guide

For the better part of a decade, businesses put 'chatbots' on their landing pages that were little more than IF/THEN decision trees. The logic was rigid: 'If the customer types word X, show pre-written response Y.' The result was a frustrating user experience that often ended with the customer demanding a human agent, damaging brand trust in the process.

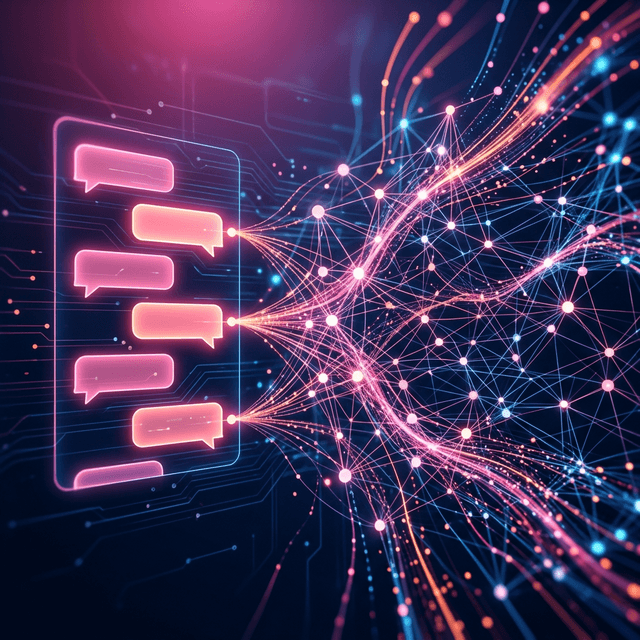

Advances in Large Language Models (LLMs) have changed how customer service can be delivered. Modern, enterprise-grade conversational AI does not follow fixed scripts. It interprets user intent, gauges sentiment, breaks down multi-part queries, and generates contextual responses grounded in your company's private internal documentation.

A common and expensive misconception among executives is that making an AI model 'understand' your business requires spending $500,000 to custom-train a foundational LLM from scratch. A far more cost-effective engineering answer is RAG: Retrieval-Augmented Generation.

At Renshok, our AI engineers build custom data ingestion pipelines that consume your existing corporate assets (dense PDFs, thousands of resolved Zendesk support tickets, technical product manuals, and internal wiki pages), split them into chunks, convert each chunk into a high-dimensional vector embedding, and store the result in a vector store such as Pinecone or Postgres with the pgvector extension.
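The ingestion step can be sketched in a few lines of Python. This is a minimal illustration, not Renshok's actual pipeline: `toy_embed` is a hypothetical stand-in for a real embedding model (it hashes words into a small fixed-size vector), the in-memory list stands in for Pinecone or pgvector, and the names `Chunk`, `split_into_chunks`, and `ingest` are assumptions made for the example.

```python
import hashlib
import math
import re
from dataclasses import dataclass

DIM = 64  # toy dimensionality; real embedding models use far larger vectors


@dataclass
class Chunk:
    source: str          # which document the text came from
    text: str            # the chunk itself
    vector: list         # its embedding


def toy_embed(text):
    """Hypothetical stand-in for an embedding model:
    a hashed bag of words, L2-normalized."""
    vec = [0.0] * DIM
    for word in re.findall(r"[a-z0-9]+", text.lower()):
        bucket = int(hashlib.md5(word.encode()).hexdigest(), 16) % DIM
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else vec


def split_into_chunks(text, max_words=40):
    """Split a document into consecutive chunks of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words])
            for i in range(0, len(words), max_words)]


def ingest(documents, max_words=40):
    """Chunk and embed every document; the returned list plays the
    role of the vector database in this sketch."""
    index = []
    for source, text in documents.items():
        for piece in split_into_chunks(text, max_words):
            index.append(Chunk(source, piece, toy_embed(piece)))
    return index
```

In a production pipeline the chunking would usually respect sentence or section boundaries, and the vectors would be written to the vector store together with metadata such as the source document and access permissions.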

When a customer asks a specific question, our system embeds the query, searches the vector database for the three most relevant text snippets, and injects them into the underlying LLM's system prompt, typically within milliseconds. The LLM then generates a conversational answer grounded in your verified corporate facts. This substantially reduces the risk of AI 'hallucinations', although it does not eliminate it: the model can still misread or overextend the retrieved text.
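The retrieve-then-inject flow described above can be sketched as follows. This is an illustrative example under stated assumptions: `embed` is a toy word-count embedding rather than a real model, the snippet list stands in for the vector database, and the prompt wording in `build_system_prompt` is hypothetical.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy stand-in for an embedding model: a sparse word-count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question, snippets, k=3):
    """Return the k snippets most similar to the question."""
    q = embed(question)
    ranked = sorted(snippets, key=lambda s: cosine(q, embed(s)), reverse=True)
    return ranked[:k]


def build_system_prompt(question, snippets, k=3):
    """Inject the retrieved snippets into the system prompt as the
    only facts the model is allowed to use."""
    facts = "\n".join(f"- {s}" for s in retrieve(question, snippets, k))
    return (
        "Answer using only the facts below. "
        "If they do not cover the question, say you do not know.\n"
        f"Facts:\n{facts}"
    )
```

Instructing the model to admit when the facts do not cover the question is one common way to limit unsupported answers; it lowers the risk rather than removing it.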

| Core AI Feature | Renshok Custom RAG Architecture | Legacy 'Chatbot' Plugin |
|---|---|---|
| Contextual Understanding | Retrieval-Augmented Generation (RAG) | Brittle, exact keyword string matching |
| Autonomous Actions | Secure database function execution | Can only provide hardcoded static HTML links |
| Corporate Data Security | Zero-trust API architecture by default | Unauthenticated legacy payloads |
| Compute Scalability | Elastic serverless and edge compute on Vercel/AWS | Frequent crashes during holiday traffic spikes |

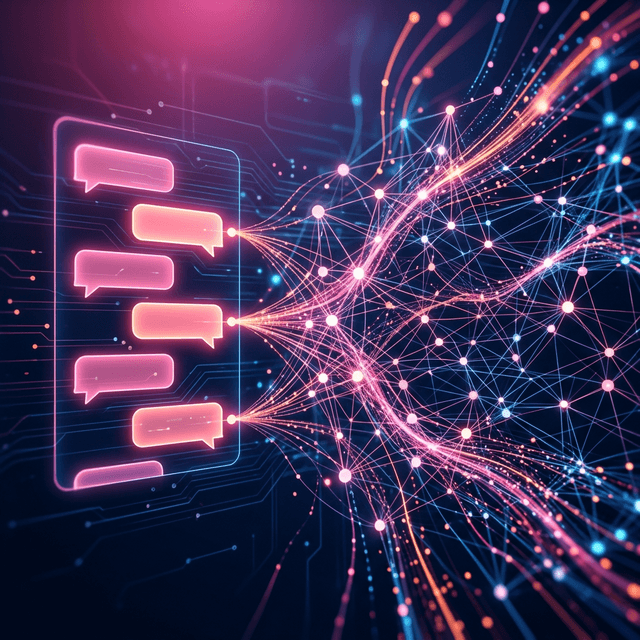

Answering complex user questions accurately is helpful, but taking programmatic action on the user's behalf is where the larger gains are. At Renshok, we engineer AI agents equipped with tightly restricted 'Function Calling' abilities.

If a verified user asks, 'Where is my order, and can you expedite the shipping over the weekend?', the AI doesn't just read an FAQ. It formats a strictly typed API query, authenticates against your internal Postgres inventory database with a restricted IAM token, retrieves the live tracking status, calculates the rate difference for expedited freight, validates the user's stored credit card via Stripe, executes the payment upgrade, and informs the user of the new delivery date. The whole sequence runs autonomously, in roughly 1,200 milliseconds.
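A restricted function-calling layer of this kind can be sketched as an allow-list plus argument validation. The example below is a simplified illustration, not Renshok's implementation: the tool names, the `ORDERS` dictionary (standing in for the Postgres database), and the `dispatch` function are all hypothetical, and the payment step is reduced to a comment.

```python
import json

# Hypothetical in-memory data standing in for the order database.
ORDERS = {
    "A1001": {"owner": "user-42", "status": "in transit", "shipping": "standard"},
}


def get_order_status(user_id, order_id):
    order = ORDERS.get(order_id)
    if order is None or order["owner"] != user_id:
        return {"error": "order not found"}
    return {"order_id": order_id, "status": order["status"]}


def upgrade_shipping(user_id, order_id):
    order = ORDERS.get(order_id)
    if order is None or order["owner"] != user_id:
        return {"error": "order not found"}
    # A real system would quote the rate and charge the stored card here.
    order["shipping"] = "expedited"
    return {"order_id": order_id, "shipping": "expedited"}


# Allow-list: tool name -> (handler, required argument names and types).
TOOLS = {
    "get_order_status": (get_order_status, {"order_id": str}),
    "upgrade_shipping": (upgrade_shipping, {"order_id": str}),
}


def dispatch(tool_call_json, user_id):
    """Validate a model-proposed tool call and run it only if it is
    on the allow-list and its arguments match the declared types."""
    try:
        call = json.loads(tool_call_json)
    except json.JSONDecodeError:
        return {"error": "malformed tool call"}
    if not isinstance(call, dict):
        return {"error": "malformed tool call"}
    name = call.get("name")
    if not isinstance(name, str) or name not in TOOLS:
        return {"error": "tool not allowed"}
    handler, schema = TOOLS[name]
    args = call.get("arguments", {})
    if (not isinstance(args, dict) or set(args) != set(schema)
            or not all(isinstance(args[k], t) for k, t in schema.items())):
        return {"error": "invalid arguments"}
    # user_id comes from the authenticated session, never from the model.
    return handler(user_id=user_id, **args)
```

The key design choice is that the model only proposes a call; the surrounding code decides whether it runs, and the user identity is taken from the authenticated session so the model cannot act on another customer's order.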

Stop frustrating your most valuable clients. Integrate secure AI routing agents, grounded in your own documentation, directly into your customer-facing portals. Partner with the Renshok engineering division today to build intelligent, resilient automated support systems.

