How AI Is Transforming Small & Mid-Sized Businesses in 2026

A few years ago, we had a candid conversation with a mid-sized logistics firm operating out of Mumbai. They were drowning in their own success. Their top-line revenue was soaring, but their operational complexity had scaled linearly with that growth. Every new enterprise client meant hiring three new data entry clerks. Every new logistics route meant hiring more local dispatchers. They initially approached us at Renshok asking for a custom ERP solution to 'manage the chaos.' We told them that managing chaos was the wrong objective; the goal had to be eradicating it entirely through intelligent automation.

As we navigate deeper into 2026, the global technological landscape has fundamentally shifted. Artificial intelligence is no longer the exclusive playground of Fortune 500 conglomerates with bottomless R&D budgets. The democratization of large language models (LLMs) and distributed serverless compute has shattered the barrier to entry. But here is the critical engineering truth: AI is not magic. It is, at its core, a highly advanced data processing layer. And for scaling businesses, failing to integrate this layer is no longer just a missed opportunity—it is an existential operational risk.

When a business relies exclusively on human labor for routine data routing, it artificially caps its own growth velocity. Humans are not natively designed to function as raw data parsers. They get exhausted, they inevitably make transcription errors, and they scale horribly. The real cost of ignoring AI integration isn't just the capital spent on bloated administrative payrolls; it's the sheer lack of momentum. By the time a traditional business manually analyzes a supply chain bottleneck on a spreadsheet, their tech-enabled competitor has already algorithmically bypassed it.

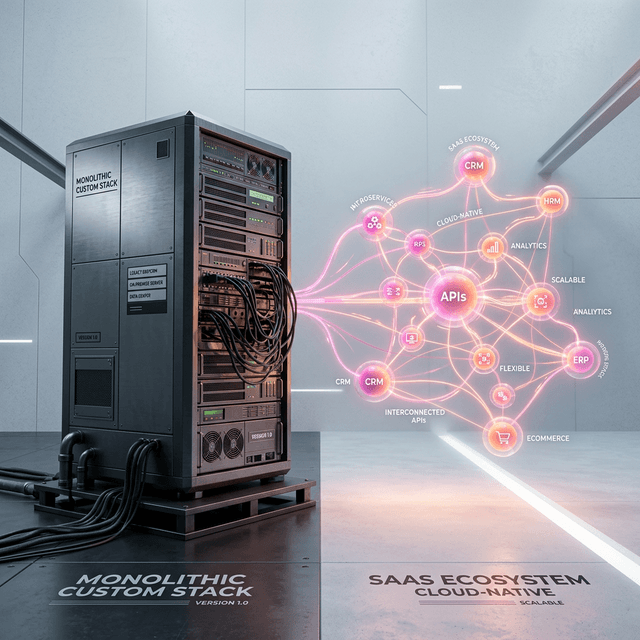

One of the most pervasive, destructive anti-patterns we observe when auditing legacy architectures is the reliance on what we refer to as the 'Human API.' This occurs when a company tapes together disjointed SaaS platforms by having employees manually download a CSV from System A, manipulate it, and manually upload it into System B. It is slow, highly susceptible to fat-finger errors, and catastrophically expensive over a standard three-year horizon.
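
To make the contrast concrete, here is a minimal Python sketch of the step a Human API performs by hand: reading a System A CSV export, renaming and validating its columns, and producing records ready for System B. The column names and the `FIELD_MAP` mapping are hypothetical placeholders, and a production pipeline would push the records to System B's API rather than printing them.

```python
import csv
import io
import json

# Hypothetical mapping from System A's export headers to System B's field names.
FIELD_MAP = {"Order ID": "order_id", "Customer": "customer_name", "Qty": "quantity"}


def transform_export(csv_text: str) -> list[dict]:
    """Convert a System A CSV export into records shaped for System B."""
    records = []
    reader = csv.DictReader(io.StringIO(csv_text))
    for row_no, row in enumerate(reader, start=2):  # row 1 is the header row
        # Keep only mapped columns and trim stray whitespace; missing cells become "".
        record = {FIELD_MAP[k]: (v or "").strip() for k, v in row.items() if k in FIELD_MAP}
        try:
            record["quantity"] = int(record["quantity"])
        except (KeyError, ValueError):
            # Fail loudly instead of silently forwarding a fat-fingered value.
            raise ValueError(f"row {row_no}: missing or non-integer quantity") from None
        records.append(record)
    return records


if __name__ == "__main__":
    export = "Order ID,Customer,Qty\nA-100, Acme Ltd ,3\nA-101,Globex,12\n"
    print(json.dumps(transform_export(export), indent=2))
```

The point of the explicit `ValueError` is that a bad cell stops the sync at a known row, rather than propagating into System B the way a manual copy-paste mistake would.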

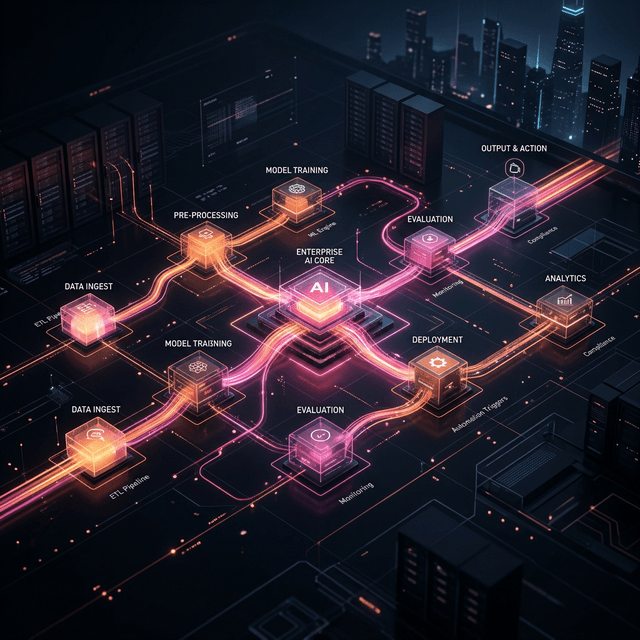

Integrating secure AI pipelines eliminates the Human API. We architect bespoke middleware, often Node.js or Go microservices deployed globally on edge networks, that uses machine learning to parse unstructured data in real time. Consider a B2B scenario where a customer emails a complex, non-standardized purchase order as a PDF. Traditionally, an account manager reads the PDF, deciphers the intent, manually checks warehouse inventory on a legacy portal, and types up a formal invoice.

In a fully modernized architecture, an AI pipeline securely ingests the incoming email through a webhook. It extracts the semantic intent via natural language processing, validates the requested SKUs against a live PostgreSQL database, reserves those items using atomic database transactions, and autonomously replies to the client with a localized payment link. The entire operation can complete in roughly 200 milliseconds, requires no human intervention, and scales to thousands of concurrent requests.
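
The atomic reservation step is the part most worth getting right. The sketch below assumes the NLP stage has already turned the PDF into a `{sku: quantity}` dict, and it uses Python's built-in `sqlite3` as a stand-in for PostgreSQL so it runs anywhere; the `inventory` table, `make_demo_db`, and `reserve_items` are hypothetical. The same pattern carries over to PostgreSQL: one conditional `UPDATE` per line item inside a single transaction, rolled back if any line cannot be filled.

```python
import sqlite3


def make_demo_db() -> sqlite3.Connection:
    """Create an in-memory stand-in for the live inventory database (hypothetical schema)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE inventory (sku TEXT PRIMARY KEY, stock INTEGER NOT NULL)")
    conn.executemany("INSERT INTO inventory VALUES (?, ?)", [("SKU-1", 10), ("SKU-2", 2)])
    conn.commit()
    return conn


def reserve_items(conn: sqlite3.Connection, order: dict[str, int]) -> bool:
    """Reserve every line of `order` atomically: either all SKUs are reserved or none are."""
    try:
        with conn:  # commits if the block finishes, rolls back if an exception escapes
            for sku, qty in order.items():
                if qty <= 0:
                    raise LookupError(sku)  # reject nonsensical quantities before touching stock
                cur = conn.execute(
                    "UPDATE inventory SET stock = stock - ? WHERE sku = ? AND stock >= ?",
                    (qty, sku, qty),
                )
                if cur.rowcount != 1:
                    # Unknown SKU or insufficient stock: abort the entire reservation.
                    raise LookupError(sku)
        return True
    except LookupError:
        return False


if __name__ == "__main__":
    db = make_demo_db()
    print(reserve_items(db, {"SKU-1": 4, "SKU-2": 1}))  # True
    print(reserve_items(db, {"SKU-1": 1, "SKU-2": 5}))  # False; SKU-1 is rolled back too
    print(dict(db.execute("SELECT sku, stock FROM inventory")))
```

In PostgreSQL under the default Read Committed isolation, the `WHERE stock >= ?` condition is re-checked after a concurrent update to the same row commits, so two simultaneous orders cannot both claim the last unit. Placeholder syntax differs by driver.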

The vast majority of mid-sized organizations attempt to drive forward while staring strictly in the rearview mirror. Their entire analytics framework relies on logging historical events: 'We fulfilled X orders last quarter' or 'Our SaaS churn rate hit Y percent last month.' A historical dashboard merely informs you of what already broke. Predictive intelligence, on the other hand, flags what is about to fracture before the actual breakage occurs.

This paradigm shift represents the most profound commercial impact of custom AI development. By securely piping unified raw telemetry into a structured vector database and training a supervised predictive model on it, we move the company into a genuinely proactive posture. A precision manufacturing firm no longer waits for an automated lathe to fail on the assembly line; temperature covariance algorithms flag a subtle thermal anomaly 72 hours in advance and dispatch a local maintenance routine without human prompting.
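
The thermal-anomaly idea can be illustrated with a far simpler technique than the supervised, covariance-based models described above: a rolling z-score that flags any reading sitting several standard deviations away from its recent baseline. This is a substitute chosen for illustration, not the production model itself; the window size, threshold, and sample temperatures are hypothetical, and a real deployment would hand each hit to a maintenance scheduler rather than printing it.

```python
from collections import deque
from statistics import mean, pstdev


def detect_thermal_anomalies(readings, window=20, threshold=3.0):
    """Yield (index, value, z_score) for readings far outside the rolling baseline."""
    history = deque(maxlen=window)  # the most recent `window` readings
    for i, value in enumerate(readings):
        if len(history) == window:
            baseline = list(history)
            mu = mean(baseline)
            sigma = pstdev(baseline)
            if sigma > 0:
                z = (value - mu) / sigma
                if abs(z) >= threshold:
                    yield i, value, z
        # Flagged readings still enter the baseline; a stricter version could skip them.
        history.append(value)


if __name__ == "__main__":
    # Hypothetical lathe spindle temperatures in degrees C: a stable oscillation, then a spike.
    temps = [70.0, 70.5] * 10 + [78.0]
    for idx, temp, z in detect_thermal_anomalies(temps):
        print(f"reading {idx}: {temp} C is {z:.1f} sigma from baseline")
```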

For a growing B2B SaaS startup, the backend infrastructure analyzes live user session behavior (API latency, feature-utilization drop-offs, and login frequency) to identify user cohorts that the model estimates are 85% likely to cancel their subscriptions within the next month. It then autonomously triggers a personalized, dynamic re-engagement sequence. This isn't theoretical white-paper science fiction; this is the standard operating architecture we deploy for Renshok clients globally.
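
A churn score of this kind is often a logistic model over per-user features. The sketch below uses logistic regression with hypothetical, hand-picked coefficients purely to show the shape of the computation; real weights would be fitted on labeled cancellation history, and the feature names in `SessionStats` are assumptions. The 85% figure from the text becomes the `threshold` argument.

```python
import math
from dataclasses import dataclass


@dataclass
class SessionStats:
    """Hypothetical per-user features aggregated from live session telemetry."""
    p95_api_latency_ms: float
    feature_usage_drop: float  # share of previously used features now untouched, 0..1
    logins_last_30d: int


# Hypothetical coefficients; real ones would be fitted on labeled churn history.
WEIGHTS = {"latency": 0.004, "usage_drop": 3.0, "logins": -0.25}
BIAS = -1.5


def sigmoid(x: float) -> float:
    # Numerically stable logistic function (avoids overflow in math.exp).
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def churn_probability(s: SessionStats) -> float:
    """Linear score over the features, squashed to a probability."""
    score = (BIAS
             + WEIGHTS["latency"] * s.p95_api_latency_ms
             + WEIGHTS["usage_drop"] * s.feature_usage_drop
             + WEIGHTS["logins"] * s.logins_last_30d)
    return sigmoid(score)


def at_risk(users: dict[str, SessionStats], threshold: float = 0.85) -> list[str]:
    """Return IDs of users whose estimated churn probability meets the threshold."""
    return sorted(uid for uid, s in users.items() if churn_probability(s) >= threshold)


if __name__ == "__main__":
    users = {
        "u1": SessionStats(p95_api_latency_ms=800, feature_usage_drop=0.9, logins_last_30d=1),
        "u2": SessionStats(p95_api_latency_ms=150, feature_usage_drop=0.1, logins_last_30d=20),
    }
    print(at_risk(users))  # ['u1'], the candidates for the re-engagement sequence
```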

The ultimate promise of digital transformation is decoupled scalability. Historically, if a professional services or retail company wished to double its output, it was forced to roughly double its staff. The profit margin stayed flat at best and often shrank under the weight of increased management overhead. When a company injects deep automation into its operational backbone, it severs that linear relationship. A highly localized business can expand aggressively into multiple international markets with its existing lean core team.

Realizing this vision requires serious systemic modernization. You cannot simply slap a conversational AI wrapper onto a twenty-year-old monolithic application and realistically expect enterprise-grade performance. It demands a deliberate, decoupled approach to software topology. At Renshok, we strongly advocate migrating legacy workloads to serverless, containerized infrastructure. If your AI-enabled application needs to process a massive dataset for a user in Singapore, the compute should execute in a datacenter in or near Singapore, not make latency-heavy round trips to a saturated US-East server.
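
Production edge platforms usually route users to the closest location through anycast networking or geo-aware DNS, so application code rarely does this itself. Still, the underlying idea can be sketched as picking the region with the smallest great-circle (haversine) distance, a rough proxy for network latency. The region names and approximate coordinates below are hypothetical.

```python
import math

# Hypothetical region catalogue: approximate (latitude, longitude) of each datacenter city.
REGIONS = {
    "ap-singapore": (1.35, 103.82),
    "ap-mumbai": (19.08, 72.88),
    "eu-frankfurt": (50.11, 8.68),
    "us-east": (38.90, -77.04),
}

EARTH_RADIUS_KM = 6371.0


def haversine_km(a: tuple[float, float], b: tuple[float, float]) -> float:
    """Great-circle distance between two (lat, lon) points given in degrees."""
    lat1, lon1, lat2, lon2 = map(math.radians, (*a, *b))
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(min(1.0, math.sqrt(h)))


def nearest_region(user_location: tuple[float, float]) -> str:
    """Pick the region closest to the user, as a rough proxy for lowest latency."""
    return min(REGIONS, key=lambda r: haversine_km(user_location, REGIONS[r]))


if __name__ == "__main__":
    print(nearest_region((1.29, 103.85)))  # a user in central Singapore -> ap-singapore
```

Physical distance is only a proxy; real routing decisions would use measured round-trip times, which also capture congestion on a saturated region.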

| Operational Vector | The Legacy 'Human API' Baseline | Renshok AI-Native Architecture |
|---|---|---|
| Data Pipeline Latency | Manual execution ranging from hours to days | Executed autonomously in sub-200 milliseconds |
| Scaling Friction | High (Requires physical onboarding, training & HR) | Zero (Auto-scaling serverless containers handle spikes) |
| Error Handling | Manual mistakes compound, leading to systemic risk | Strict code validation logic with exponential retries |
| Knowledge Silos | Business logic is lost when critical employees exit | Domain logic is encoded permanently into the architecture |
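
The "exponential retries" in the error-handling row usually mean retrying transient failures with exponentially growing delays plus random jitter, so that many clients recovering at once do not hammer a dependency in lockstep. A minimal Python sketch, with hypothetical default parameters and an injectable `sleep` so it can be tested without waiting:

```python
import random
import time


def retry_with_backoff(fn, max_attempts=5, base_delay=0.1, max_delay=5.0,
                       retry_on=(ConnectionError, TimeoutError), sleep=time.sleep):
    """Call fn(), retrying transient failures with capped exponential backoff and full jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return fn()
        except retry_on:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the original error
            # Delay ceiling doubles each attempt (0.1 s, 0.2 s, 0.4 s, ...) up to max_delay.
            ceiling = min(max_delay, base_delay * 2 ** attempt)
            sleep(random.uniform(0, ceiling))  # full jitter de-synchronizes clients
```

Errors outside `retry_on`, such as a validation failure, propagate immediately, since retrying a request that is wrong will not make it right.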

As a specialized Indian engineering group building robust solutions for global enterprises, we have a clear vantage point on the digital economy. We routinely see the staggering volume of redundant, soul-crushing manual processes that paralyze supposedly 'modern' organizations across North America, Europe, and Asia. Conversely, we see the explosive competitive advantage unlocked when a decisive executive team resolves to burn its convoluted legacy systems to the ground and rebuild cleanly.

Deploying AI at scale is not about downloading a trending software tool. It is a ground-up rewiring of how your company actually functions. It requires strict data hygiene, sophisticated zero-trust cloud security, and a partnered engineering team that deeply understands how to build resilient, type-safe backend systems capable of gracefully handling millions of concurrent, autonomous events.

The companies that dominate their sectors over the next decade will not be the largest by employee count. They will be the leanest: small, highly augmented human teams working alongside custom AI logic and executing strategies at a velocity their legacy competitors simply cannot match. At Renshok Software Solutions, we don't just speak abstractly about this emerging future; we engineer the architectural pipelines that make it possible.


Partner with Renshok Software Solutions to build exceptional, scalable digital products. Whether you are scaling across India or expanding globally, our expert engineering team is ready to bring your vision to life.